IAB AI Transparency & Disclosure Framework: Complete Guide 2026

The IAB AI Transparency & Disclosure Framework (January 2026) is a voluntary industry standard that tells advertisers, agencies, and ad tech platforms when AI-generated or AI-modified ad content requires a consumer-facing disclosure label — and exactly what that label must say. It covers five content types (images, video, audio, synthetic influencers, and text) and uses a three-part materiality test to determine if disclosure is required: Deception Potential, Material Impact, and Expectation Alignment. It also defines custom C2PA metadata assertions (com.iab.threshold, com.iab.disclosure) for machine-readable compliance signalling across DSPs, SSPs, and publishers.

Table of Contents

- What Is the IAB AI Transparency & Disclosure Framework?

- Why the IAB Created the AI Transparency Framework (January 2026)

- The Three Disclosure Checks

- Content-Type Rules: Images, Video, Audio, Synthetic Influencers, Text

- What Are the Official IAB AI Disclosure Labels for 2026?

- How Does C2PA Automate IAB AI Disclosure Compliance?

- Which Laws Mandate AI Disclosure in Advertising? (2026 Guide)

- The IAB Materiality Checker — Try It Free

- Who is Responsible for AI Disclosure Compliance?

- How to Implement the IAB AI Disclosure Framework: A 6-Step Guide

- Frequently Asked Questions

- Related Reading

1. What Is the IAB AI Transparency & Disclosure Framework?

The IAB AI Transparency and Disclosure Framework is an industry standard published by the Interactive Advertising Bureau (IAB) in January 2026. It is the advertising industry's most comprehensive attempt to answer one question that every brand, agency, and creative team is now asking: When AI creates or modifies ad content, does the consumer need to know?

The framework does not say "always disclose everything AI." Nor does it say "AI is just another production tool, no disclosure needed." It does something more nuanced — and more useful. It defines a materiality threshold: a test that determines whether AI involvement in content creation is significant enough that a reasonable consumer would care if they knew about it. If the answer is yes, disclosure is required and the framework tells you exactly what to say and where to put it.

The IAB is not a regulator. It cannot fine you. But the framework matters enormously for three reasons:

First, it is rapidly becoming the de facto compliance standard that major platforms, DSPs, and publishers adopt as their enforcement baseline. When Google, Meta, or The Trade Desk decide how to handle AI disclosure at the platform level, they are likely to build toward this framework.

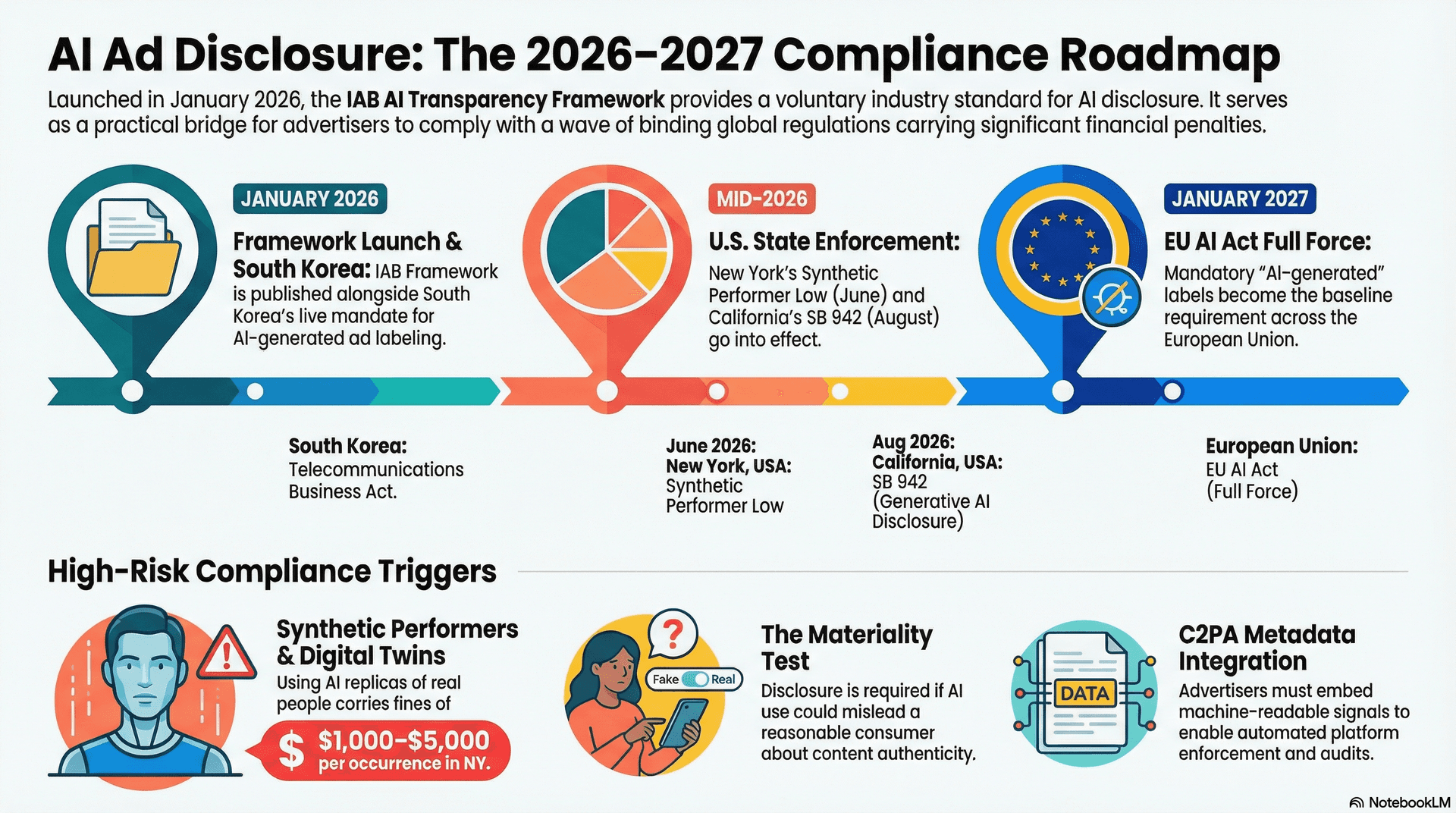

Second, it maps directly onto the disclosure requirements embedded in binding law — particularly the New York Synthetic Performer Law (effective June 2026), California SB 942 (effective August 2026), and the EU AI Act — giving compliance teams a single framework they can use across jurisdictions.

Third, it defines the C2PA custom assertions that ad tech infrastructure uses to signal AI disclosure compliance in machine-readable metadata. If you are building or buying ad tech in 2026, the IAB framework is the specification you are building to.

2. Why the IAB Created the AI Transparency Framework (January 2026)

- The Problem: Fragmented regulations (NY, CA, EU, S. Korea) and eroding consumer trust.

- The Solution: A unified industry standard that harmonizes legal compliance with technical ad-serving infrastructure.

- Key Impact: Moves AI disclosure from a manual "policy check" to a machine-readable technical requirement (C2PA).

AI-generated advertising content went from novelty to mainstream in roughly 18 months. By late 2024, a significant share of digital display creative was being generated or substantially modified by AI tools. By 2025, AI-synthesized voices were appearing in radio and podcast ads at scale.

The advertising industry's existing disclosure frameworks were not built for this. Prior standards assumed that advertising content was created by people. The question "did AI make this?" simply was not on the regulatory or ethical checklist.

Three things converged to make the IAB move:

Regulatory Pressure

Legislators in NY and CA passed laws requiring AI disclosure, with fines of up to $5,000 per violation. South Korea's rules are already in force, and the EU AI Act's obligations are being phased in.

Consumer Trust

Eroding trust from undisclosed AI imagery and voices directly impacts campaign performance and brand authenticity in the long term.

Platform Enforcement

Major DSPs and exchanges now require technical signals (C2PA) to flag AI creative, forcing a move from simple policy to technical standard.

Regulatory Pressure & Real Financial Stakes

The legal landscape is no longer hypothetical. Legislators in New York and California have passed laws with sharp teeth, including fines of up to $5,000.

The New York Synthetic Performer Law and California SB 942 require clear disclosure of AI-generated performers and content. Suddenly, the legal landscape was fragmenting, and the industry needed a unified standard to navigate it without managing 50 different sets of rules.

The IAB's response was the January 2026 framework: the first industry standard that is simultaneously a decision-making guide, a vocabulary of specific label texts, and a technical specification for C2PA metadata.

3. The Three Disclosure Checks

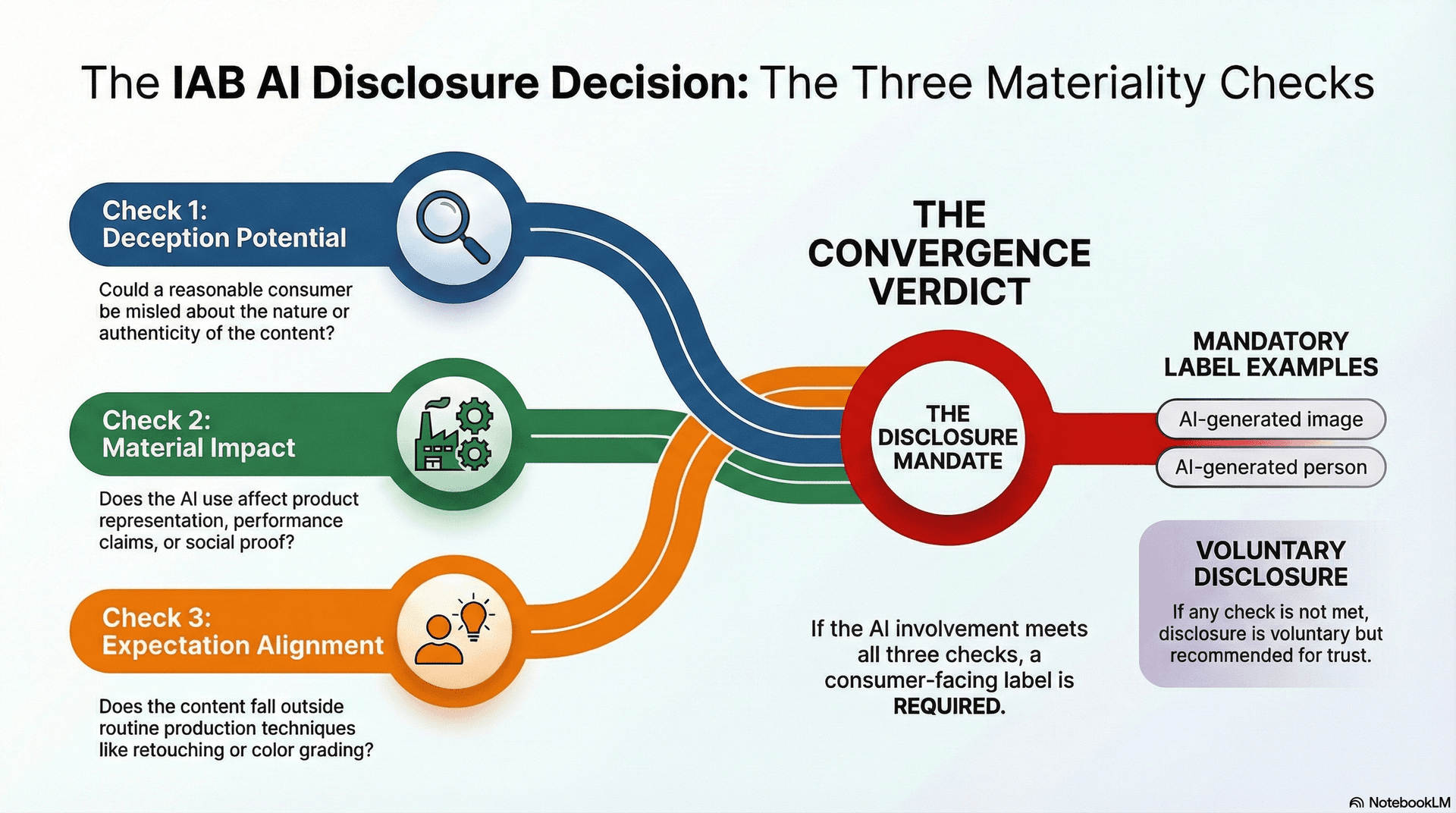

Before requiring a disclosure label, the IAB framework asks three simple yes/no questions. These determine if the AI involvement is significant enough that a consumer needs to know about it. If all three answers are "Yes", a label is required.

The materiality test is intentionally practical — it is designed to let routine AI-assisted production continue without disclosure burdens, while drawing a clear line at the point where AI involvement could actually mislead a consumer about what they are buying or who is endorsing it. Think of the three checks as a filter: most AI post-production work fails Check 1 and never needs a label. Only the cases that clear all three reach the point of required disclosure.

Check 1: Deception Potential. Is it realistic enough to deceive a consumer?

Could a reasonable person be misled about the nature, origin, or authenticity of this content? If it's a clearly fantastical rocket ship or a cartoon, people know it's not real photography.

Yes: continue to Check 2. No: no disclosure required.

Check 2: Material Impact. Does it materially change what the consumer thinks of the product?

Does the AI change product features, performance claims, or social proof? Retouching a background is fine; making a product look slimmer or using a fake person to endorse it is a material change.

Yes: continue to Check 3. No: no disclosure required.

Check 3: Expectation Alignment. Is it beyond what people expect from standard production?

Retouching and color grading are routine. AI-generated people who don't exist or deepfakes of real individuals fall outside standard expectations and require disclosure if they appear realistic.

Yes: all checks passed, disclosure required. No: no disclosure required.

It is worth noting what the test is not asking. It does not ask whether AI was involved at all — the framework explicitly accepts that AI tools are now part of the standard creative production stack. It does not ask whether the brand intended to deceive. It asks only whether the output, as a consumer would perceive it, clears these three thresholds. An AI tool used in good faith with no deceptive intent can still produce content that requires a label if the output meets the materiality criteria.
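Because the three checks act as a short-circuiting filter, the decision logic is easy to encode. The sketch below is a minimal illustration in Python, assuming a reviewer has already answered each check yes or no; `MaterialityAnswers` and `evaluate_materiality` are hypothetical names, not part of the IAB framework or any tool.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class MaterialityAnswers:
    """Yes/no answers to the three IAB checks for a single creative (illustrative model)."""
    deception_potential: bool   # Check 1: realistic enough to mislead a reasonable consumer?
    material_impact: bool       # Check 2: changes features, performance claims, or social proof?
    beyond_expectations: bool   # Check 3: beyond routine production (retouching, grading)?


def evaluate_materiality(a: MaterialityAnswers) -> Tuple[bool, str]:
    """Run the checks in order; the first 'no' ends the evaluation."""
    checks = [
        ("Check 1 (Deception Potential)", a.deception_potential),
        ("Check 2 (Material Impact)", a.material_impact),
        ("Check 3 (Expectation Alignment)", a.beyond_expectations),
    ]
    for name, answered_yes in checks:
        if not answered_yes:
            return False, f"No disclosure required: stopped at {name}"
    return True, "All three checks passed: disclosure required"


if __name__ == "__main__":
    cartoon_rocket = MaterialityAnswers(False, True, True)
    synthetic_endorser = MaterialityAnswers(True, True, True)
    print(evaluate_materiality(cartoon_rocket))
    print(evaluate_materiality(synthetic_endorser))
```

Note that intent never appears as an input: consistent with the paragraph above, only the perceived output is evaluated.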

4. Content-Type Rules: Images, Video, Audio, Synthetic Influencers, Text

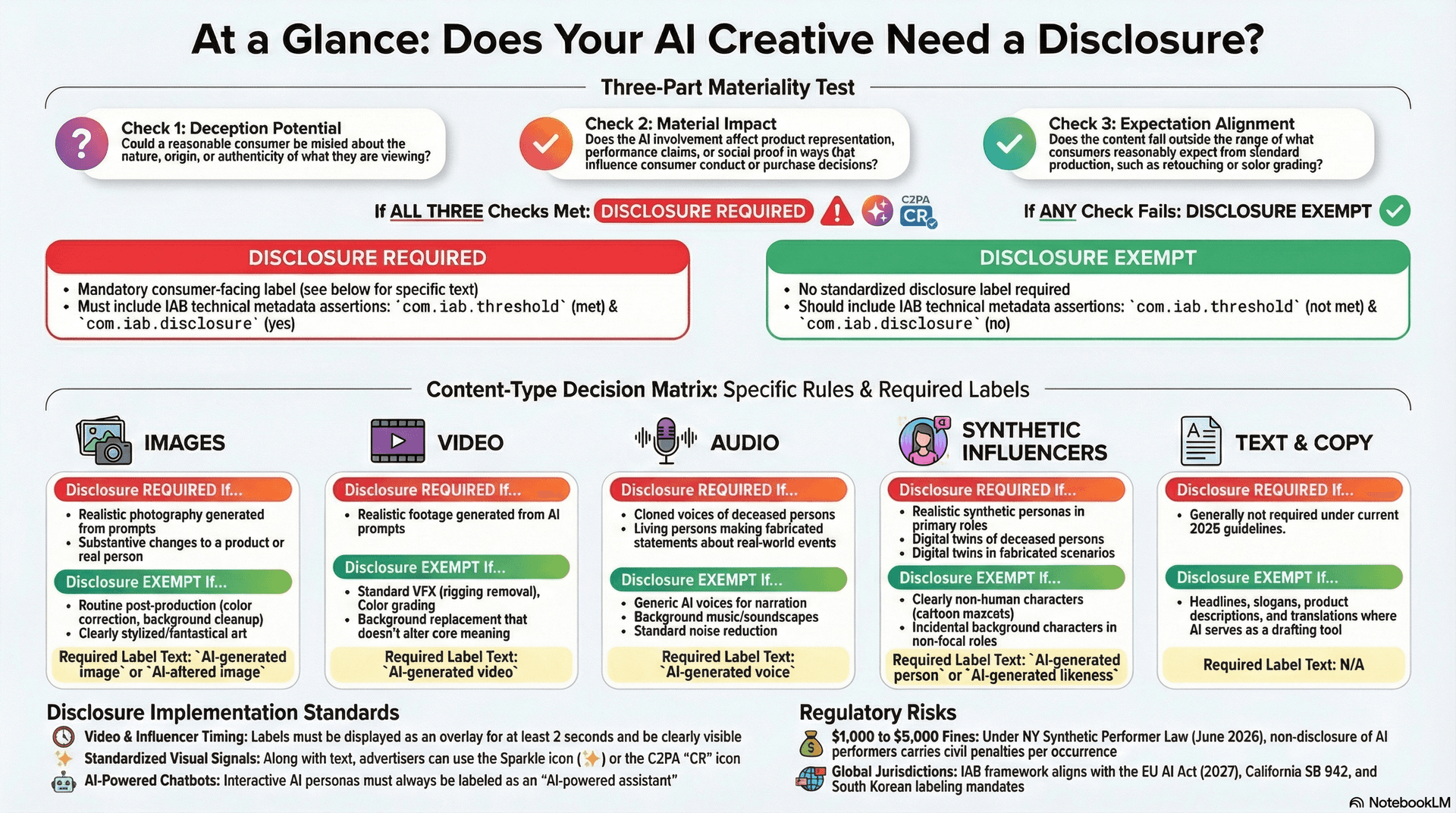

The IAB framework applies the materiality test differently across five content types. Each has specific decision branches, specific exemptions, and specific label texts. Understanding which category your creative falls into is the most important step — the downstream disclosure requirements and legal exposure vary significantly across them.

4.1 Images

The most common application of the framework, because AI image generation is now the default production tool for many creative teams. The key variable is photorealism — not the tool used, not whether the image started from a real photograph.

| Scenario | Status | Disclosure Rule |
|---|---|---|
| Fully Generated | 🔴 Required | If the image looks like a real photograph. |
| Substantive Edit | 🔴 Required | If the product itself or a real person is modified. |
| Stylized / Abstract | 🟢 Exempt | If the artificial nature is obvious (cartoons, 3D art). |
| Routine Edit | 🟢 Exempt | Background cleanup, color correction, blemish removal. |

A photorealistic AI render of a coffee cup on a kitchen counter requires disclosure. A clearly illustrated or 3D-animated version of the same coffee cup does not. The distinction is not aesthetic — it is whether the image could pass for a real photograph in a casual viewing context.

The trickiest edge case is substantive editing of real photography. The framework draws a precise line here: AI changes that alter the appearance of the product itself or of a real person shown in the ad require disclosure. Background-only replacements are generally exempt, provided the new background does not misrepresent where or how the product performs. Removing a cluttered shelf and replacing it with a clean studio backdrop is fine. Adding a beach backdrop to a moisturiser to imply it's water-resistant when it isn't is not.
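The image rules reduce to a small decision function. This is a hypothetical sketch that assumes a reviewer has already classified the edit type and judged photorealism; `ImageEdit` and `image_needs_label` are illustrative names, not an IAB or platform API.

```python
from enum import Enum


class ImageEdit(Enum):
    FULLY_GENERATED = "fully generated"
    PRODUCT_OR_PERSON_EDIT = "substantive edit to the product or a real person"
    BACKGROUND_ONLY = "background-only replacement"
    ROUTINE = "routine edit"  # cleanup, color correction, blemish removal


def image_needs_label(edit: ImageEdit, photorealistic: bool,
                      background_misrepresents_product: bool = False) -> bool:
    """Return True when the image rules call for a disclosure label."""
    if edit is ImageEdit.FULLY_GENERATED:
        # Photorealism decides; obvious illustration or 3D art is exempt.
        return photorealistic
    if edit is ImageEdit.PRODUCT_OR_PERSON_EDIT:
        return True
    if edit is ImageEdit.BACKGROUND_ONLY:
        # Exempt unless the new background misrepresents where or how the product performs.
        return background_misrepresents_product
    return False  # routine edits are exempt


if __name__ == "__main__":
    print(image_needs_label(ImageEdit.FULLY_GENERATED, photorealistic=True))   # photoreal coffee cup
    print(image_needs_label(ImageEdit.FULLY_GENERATED, photorealistic=False))  # illustrated cup
    print(image_needs_label(ImageEdit.BACKGROUND_ONLY, True,
                            background_misrepresents_product=True))            # misleading beach backdrop
```

The studio-backdrop swap maps to `BACKGROUND_ONLY` with the flag left at `False`, so it stays exempt; the beach backdrop implying water resistance flips the flag and triggers a label.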

4.2 Video

Video rules follow image logic but add a timing requirement: when a label is required, it must remain visible for a minimum duration so that consumers actually register it.

| Scenario | Status | Disclosure Rule |
|---|---|---|
| Fully Generated | 🔴 Required | Realistic video with no clear artificial markers. |
| Altered Subject | 🔴 Required | If a real person or product is meaningfully changed. |
| Animated / Stylized | 🟢 Exempt | Clearly 2D or 3D animation. |
| Standard Post-Prod | 🟢 Exempt | Noise reduction, color grading, AI upscaling. |

If disclosure is required, the label must appear for at least 2 seconds at the first appearance of the AI-generated or AI-altered elements — not buried at the end of the spot. A label that flashes for a half-second in a corner position does not satisfy this requirement.

AI upscaling, frame interpolation, and noise reduction are all exempt. These are established post-production techniques that consumers neither expect to see disclosed nor would find material to their understanding of the product. The disclosure obligation activates when AI is generating or altering something a consumer would consider real.

4.3 Audio

Audio rules are built around identity and authenticity, and they carry the sharpest legal exposure of any content type for U.S. advertisers. The framework's audio provisions map directly onto the New York Synthetic Performer Law — the binding legislation with the hardest deadline and the clearest penalty structure.

| Scenario | Status | Disclosure Rule |
|---|---|---|
| Deceased Person | 🔴 Required | ALWAYS required, even with estate authorization. |
| Fabricated Claim | 🔴 Required | Using a real person's voice clone to say unverified things. |
| Generic AI Voice | 🟢 Exempt | Text-to-speech with no intended human likeness. |
| Contracted Clone | 🟢 Exempt | Standard authorized use for scripted commercial copy. |

The deceased performer rule is a hard line with no exceptions. It does not matter whether the estate has consented, whether the estate was paid, or whether the use is respectful of the performer's legacy. Any AI-synthesized voice of a deceased person in a commercial context requires disclosure. This is one of the clearest provisions in the framework, and one on which the IAB, New York, California, and the EU are aligned.

For living persons, the framework introduces a context dependency: authorized voice clones used for scripted commercial copy are generally exempt. The line is crossed when the voice clone is used to generate statements about real-world facts, fabricate endorsements, or create impressions that the person holds views they have not expressed.

Because audio ads often have no visual component, the framework specifies that disclosure must appear in the ad metadata and in any visible companion banner or player interface associated with the ad. In audio-only contexts (smart speakers, radio), disclosure in the spoken content or a clear spoken cue is required.
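The audio rules combine two questions: whose voice it is, and whether the ad has a visual surface. A minimal sketch, assuming the voice use has already been classified into one of the four table rows; `VoiceUse` and `audio_disclosure_channels` are hypothetical names chosen for illustration.

```python
from enum import Enum, auto
from typing import List


class VoiceUse(Enum):
    GENERIC_TTS = auto()                 # text-to-speech with no intended human likeness
    LIVING_AUTHORIZED_SCRIPTED = auto()  # contracted clone reading scripted commercial copy
    LIVING_FABRICATED = auto()           # clone voicing unverified claims or fake endorsements
    DECEASED_PERSON = auto()             # always disclosed, regardless of estate consent


def audio_disclosure_channels(use: VoiceUse, has_visual_interface: bool) -> List[str]:
    """Return where the disclosure must appear; an empty list means exempt."""
    if use in (VoiceUse.GENERIC_TTS, VoiceUse.LIVING_AUTHORIZED_SCRIPTED):
        return []
    if has_visual_interface:
        return ["ad metadata", "companion banner or player interface"]
    # Smart speakers, radio: the disclosure has to be heard, not only embedded.
    return ["spoken disclosure or clear spoken cue"]


if __name__ == "__main__":
    print(audio_disclosure_channels(VoiceUse.DECEASED_PERSON, has_visual_interface=False))
```

Keeping the channel list separate from the yes/no verdict makes it easy to reuse the same classification for podcast players (which have a visual surface) and smart speakers (which do not).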

The New York Synthetic Performer Law takes effect in June 2026 and carries civil penalties of $1,000–$5,000 per occurrence for undisclosed use of AI-synthesized voices of real individuals. This applies to both living and deceased performers. Any agency running audio campaigns in New York markets needs a disclosure review in its production workflow before that date.

4.4 Synthetic Influencers

This is the highest-risk category for regulatory fines across both NY and California, and the one where brands and agencies are most likely to have compliance gaps they are not aware of. The category is broad — it covers digital twins of real people, fully synthetic AI personas, and interactive AI chatbots appearing in ads.

| Scenario | Status | Disclosure Rule |
|---|---|---|
| Deceased Twin | 🔴 Required | ALWAYS required. |
| Realistic Persona | 🔴 Required | If they appear as a primary brand endorser. |
| Interactive Bot | 🔴 Required | Must state: "AI-powered assistant." |
| Stylized Avatar | 🟢 Exempt | Clearly non-human avatars or low-fidelity characters. |

The digital twin rules for living persons introduce an authorization question that the other content types do not. Using an AI-generated likeness of a real, living person without their explicit authorization is not just a disclosure failure — it is likely a violation of that person's personality rights and potentially a direct breach of the New York Synthetic Performer Law. Authorization is necessary but not sufficient: even with authorization, placing a digital twin of a living person in fabricated events or locations they never visited requires a disclosure label.

Fully synthetic personas — AI-generated people who have never existed — require disclosure if they are realistic in appearance and appear in a primary endorsement role. An AI-generated person in the background of a lifestyle shot is incidental and does not require disclosure. That same AI-generated person speaking directly to camera about a product does. The framework's "primary vs. incidental" distinction is the key variable here.
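The synthetic influencer rules add an authorization gate in front of the disclosure question. The sketch below is illustrative only and assumes each persona has been tagged by a reviewer; `Persona`, `PersonaKind`, and `persona_verdict` are hypothetical names, and the "blocked" outcome reflects the point above that a label cannot cure missing authorization.

```python
from dataclasses import dataclass
from enum import Enum, auto


class PersonaKind(Enum):
    DECEASED_TWIN = auto()
    LIVING_TWIN = auto()
    FULLY_SYNTHETIC = auto()
    INTERACTIVE_BOT = auto()
    STYLIZED_AVATAR = auto()


@dataclass
class Persona:
    kind: PersonaKind
    realistic: bool = True
    primary_endorser: bool = False   # speaks to camera or endorses, vs. incidental background
    fabricated_events: bool = False  # living twin placed in events or places that never happened
    authorized: bool = True          # explicit consent from the living person


def persona_verdict(p: Persona) -> str:
    if p.kind is PersonaKind.LIVING_TWIN and not p.authorized:
        # Authorization is necessary; a disclosure label does not replace it.
        return "blocked: no authorization"
    if p.kind in (PersonaKind.DECEASED_TWIN, PersonaKind.INTERACTIVE_BOT):
        return "label required"
    if p.kind is PersonaKind.LIVING_TWIN:
        return "label required" if p.fabricated_events else "exempt"
    if p.kind is PersonaKind.FULLY_SYNTHETIC:
        # The primary vs. incidental distinction only matters for realistic personas.
        return "label required" if (p.realistic and p.primary_endorser) else "exempt"
    return "exempt"  # stylized, clearly non-human avatars


if __name__ == "__main__":
    background_extra = Persona(PersonaKind.FULLY_SYNTHETIC, primary_endorser=False)
    to_camera_spokesperson = Persona(PersonaKind.FULLY_SYNTHETIC, primary_endorser=True)
    print(persona_verdict(background_extra), "/", persona_verdict(to_camera_spokesperson))
```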

Not sure if yours qualifies? If you're now uncertain whether a synthetic persona in your current campaigns needs a label, the Materiality Checker in "Does My AI Ad Need a Disclosure Label?" gives you a verdict on your specific creative in under 90 seconds.

4.5 Text and Copy

The most lenient category in the current framework, and the one most likely to evolve as AI-generated content becomes more sophisticated.

| Scenario | Status | Disclosure Rule |
|---|---|---|
| Standard Copy | 🟢 Exempt | AI-written headlines, emails, or body text. |
| Testimonials | 🔴 Required | Note: Fabricated AI reviews are deceptive per the FTC. |

The framework's reasoning for exempting AI-written copy is grounded in consumer expectations: advertising copy has always been written by professionals using whatever tools were available, and the use of AI in that process does not change what the consumer learns about the product. A headline is a headline — the production method does not affect the consumer's understanding of the claim.

The testimonial exception is important and sits slightly outside the IAB framework proper. Fabricated AI-generated testimonials — fake reviews, manufactured customer quotes — fall under the FTC's general deception standards regardless of whether the IAB framework would otherwise require a label. The IAB framework does not need to address this directly because broader consumer protection law already does.

The framework notes that text disclosure requirements may be revisited as AI-generated testimonials and synthetic social proof become more prevalent. Treat the current text exemption as stable for 2026 but watch for updates.

5. What Are the Official IAB AI Disclosure Labels for 2026?

The framework is prescriptive about both what the label must say and where and for how long it must appear. Standardised label text is not stylistic preference — it is what allows consumers to recognise the labels across different ad contexts, and what allows platforms to detect and enforce compliance programmatically.

The Complete IAB Label Reference Table

The table below maps every content type that requires disclosure to its IAB-specified label text, required placement method, and duration.

Source: IAB AI Transparency and Disclosure Framework, January 2026, pp. 18–19. Table recreated with additional practical notes.

| Content Type | Required Label | Placement & Duration | Practical Note |
|---|---|---|---|
| Synthetic Image | | Near the image, clearly visible | No minimum duration (static placement). Sparkle icon (✨) or C2PA CR icon are accepted alternatives. |
| AI-Altered Image | | Near the image, clearly visible | Applies when the product itself or a real person in the photo has been substantively modified. Background-only changes are exempt. |
| Synthetic Video | | First frame → persists for the entire video | The label cannot be shown briefly then removed. It must remain on screen for the full duration of consumer exposure to AI-generated content. |
| Synthetic Voice (Deceased Person) | | Spoken verbal disclosure, before or after AI segment | Required even with estate authorisation. In audio-only contexts, spoken disclosure must be repeated at least once if the ad exceeds 60 seconds. |
| Synthetic Voice (Living Person, Fabricated Statements) | | Audio: verbal pre- or post-content. Video: text overlay | Applies when a voice clone makes unverified statements about real-world facts. Standard scripted commercial use with authorisation is exempt. In audio-only contexts, the same spoken verbal disclosure rules apply: pre/post placement, repeated at least once if the ad exceeds 60 seconds. |
| Synthetic Avatar | | First frame → persists throughout synthetic avatar use | A fully AI-generated person who could be mistaken for a real human. If the avatar is in the ad for its full duration, the label is present for its full duration. |
| Digital Twin (Deceased Person) | | First frame → persists throughout use | Always required regardless of estate authorisation or nature of content depicted. |
| Digital Twin (Living Person, Fabricated Events) | | First frame → persists throughout use | Applies to authorised AI replicas shown in scenarios that never occurred. Standard authorised endorsement is exempt. |
| AI Chatbot / Conversational Agent | "AI-powered assistant" | At initiation → accessible throughout entire ad experience | Must be disclosed before the consumer begins the interaction. Cannot be buried in small print or a terms link. |

Three Placement Rules That Most Teams Get Wrong

Synthetic video: The framework does not specify a minimum number of seconds. It specifies that the label must remain visible for the entire duration of the video. Many creative teams assume a brief opening disclosure satisfies the requirement; it does not. If the AI-generated content is on screen, the label must be on screen.

Audio-only ads: For audio-only contexts where no visual interface exists (radio, podcast feeds, smart speaker ads), a label in metadata alone is not sufficient. The framework requires a spoken verbal disclosure in the same language as the advertisement, delivered at a normal conversational pace. If the ad runs longer than 60 seconds, that verbal disclosure must be made at least twice. Position it before or immediately after the AI-generated segment, not as an end-of-spot afterthought.

Chatbots and conversational agents: The disclosure must appear at the moment the consumer begins interacting with the AI, not after they have already engaged. An AI-powered interactive ad unit that reveals its AI nature only in a post-interaction screen does not satisfy this requirement.
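All three placement rules can be checked mechanically in a QA pass. A minimal sketch, assuming your review tooling can export AI-content and label-visibility timings as (start, end) pairs in seconds; the function names are hypothetical and not part of any ad server API.

```python
from typing import List, Tuple

Interval = Tuple[float, float]  # (start_seconds, end_seconds)


def _merge(intervals: List[Interval]) -> List[Interval]:
    """Merge overlapping or touching label intervals."""
    merged: List[Interval] = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def label_covers_ai_video(ai_segments: List[Interval], label_segments: List[Interval]) -> bool:
    """True if the label is on screen for every moment AI-generated content is."""
    merged = _merge(label_segments)
    return all(
        any(l_start <= a_start and a_end <= l_end for l_start, l_end in merged)
        for a_start, a_end in ai_segments
    )


def spoken_disclosures_required(ad_length_seconds: float) -> int:
    """Audio-only ads longer than 60 seconds need the verbal disclosure at least twice."""
    return 2 if ad_length_seconds > 60 else 1


def chatbot_disclosure_ok(disclosed_at: float, first_interaction_at: float) -> bool:
    """The chatbot disclosure must come no later than the start of the interaction."""
    return disclosed_at <= first_interaction_at


if __name__ == "__main__":
    # A label shown only for the first two seconds of AI footage fails the coverage rule.
    print(label_covers_ai_video([(3.0, 10.0)], [(3.0, 5.0)]))
```

Merging the label intervals first means a label that is re-rendered in back-to-back segments still counts as continuous coverage.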

Label Format Alternatives

The framework accepts three disclosure formats for visual content types. Text labels are the primary method and the recommended default. Two alternatives are permitted when text labels would materially compromise advertising effectiveness.

Sparkle icon (✨): An emerging cross-platform convention. Must include accessible alt text for screen readers (e.g., alt="AI-generated content"). Accepted by several major platforms in lieu of text.

C2PA Content Credentials (CR) icon: The most future-proof option. Simultaneously provides consumer-facing disclosure and machine-readable compliance via embedded C2PA assertions (com.iab.threshold, com.iab.disclosure).

Text label: The recommended default for all content types. Use the exact IAB-specified wording; do not paraphrase. The label must be in the same language as the advertisement.

Platform-applied indicators — such as Meta's "AI info" label — satisfy the IAB requirement when applied automatically based on C2PA metadata, provided they meet IAB conspicuousness standards. The advertiser remains accountable for verifying the platform has actually applied the label. Relying on platform enforcement without that verification is not a compliance strategy.

- Synthetic video requires a label that persists for the entire video — not just the first two seconds.

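- AI voices of deceased persons, and voice clones of living persons making fabricated statements, require a spoken verbal disclosure in the same language as the ad. Authorized scripted use of a living person's voice clone is exempt.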

- The 60-second rule: For audio-only ads over 60 seconds, the verbal disclosure must be made at least twice.

- Chatbot / conversational agents must disclose at the moment of initiation — not in fine print or after engagement.

- All visible labels must meet IAB conspicuousness standards — small print does not qualify.

6. How Does C2PA Automate IAB AI Disclosure Compliance?

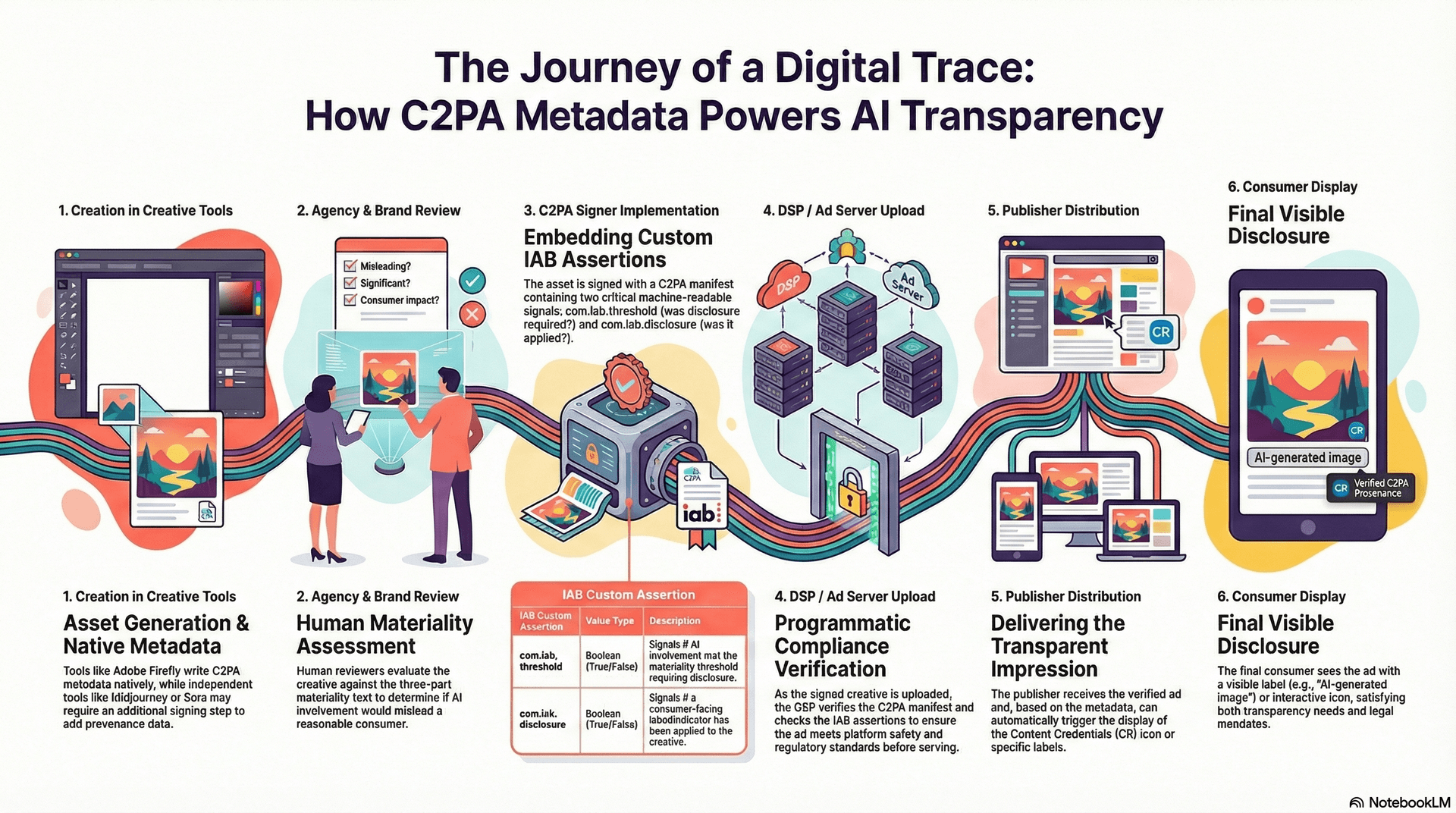

C2PA moves compliance from a "trust-based" model to a "verification-based" model. By providing cryptographic provenance, the framework enables ad-tech interoperability and programmatic enforcement at scale. Creative assets no longer just say they are compliant; they prove it with a cryptographically signed manifest.

One of the most technically significant aspects of the IAB framework is its integration with the Coalition for Content Provenance and Authenticity (C2PA) standard. C2PA is a technical specification for cryptographically signing digital content to establish its provenance — where it came from, what tools were used to create it, and whether it has been altered since publication.

The Custom IAB Assertions

The IAB framework defines two custom C2PA assertions specific to advertising:

- com.iab.threshold: a machine-readable signal indicating whether the AI involvement in this creative has met the IAB materiality disclosure threshold (true/false)

- com.iab.disclosure: a machine-readable signal indicating whether consumer-facing disclosure has been applied (true/false)

These assertions, embedded in a C2PA Content Credentials manifest attached to the ad creative file, allow DSPs, SSPs, and publishers to programmatically verify compliance at the point of impression.
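On the buy or sell side, the two assertions translate into a simple serve/block/review decision. The sketch below is hypothetical: it assumes the manifest's signature has already been validated by a C2PA SDK and the manifest parsed into a dictionary, and it assumes each IAB assertion carries its boolean as `{"value": true}`. That data shape is an illustration, not the framework's published schema.

```python
from typing import Any, Dict, Optional


def assertion_value(manifest: Dict[str, Any], label: str) -> Optional[bool]:
    """Return the boolean carried by an assertion, or None if absent or malformed."""
    for assertion in manifest.get("assertions", []):
        if assertion.get("label") == label:
            data = assertion.get("data")
            value = data.get("value") if isinstance(data, dict) else None  # assumed data shape
            return value if isinstance(value, bool) else None
    return None


def impression_decision(manifest: Dict[str, Any]) -> str:
    threshold_met = assertion_value(manifest, "com.iab.threshold")
    disclosed = assertion_value(manifest, "com.iab.disclosure")
    if threshold_met is None or disclosed is None:
        return "review"  # missing signals: route to manual review (a policy choice)
    if threshold_met and not disclosed:
        return "block"   # disclosure was required but has not been applied
    return "serve"


if __name__ == "__main__":
    manifest = {"assertions": [
        {"label": "com.iab.threshold", "data": {"value": True}},
        {"label": "com.iab.disclosure", "data": {"value": False}},
    ]}
    print(impression_decision(manifest))
```

Routing missing signals to review rather than blocking outright is one possible policy; a stricter exchange could treat absence as a block.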

Which Tools Already Write C2PA?

This is a practical question with an uneven answer. As of early 2026:

Tools that write C2PA metadata natively: Adobe Firefly (and the broader Adobe ecosystem via Content Credentials), OpenAI's Sora, Leica cameras, certain Microsoft Designer outputs, and a growing number of stock photo platforms.

Tools that do not write C2PA natively (yet): Midjourney, Runway, ElevenLabs, and most other independent AI creative tools. Content created with these tools requires a separate C2PA signing step to achieve machine-readable compliance.

The practical implication: if your creative workflow relies on tools that do not natively write C2PA, you need a process to add C2PA metadata before the asset enters your ad serving workflow. The IAB framework's adoption of C2PA creates a market signal that will accelerate tool adoption — but in 2026, workflow gaps are common.
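One way to add that signing step is to generate a manifest definition carrying the IAB assertions and pass it to the open-source `c2patool` CLI. The sketch below assumes c2patool's documented `-m` (manifest definition) and `-o` (output file) options; verify them against the version you install, and note that production signing needs your own certificate and key. The `{"value": bool}` data shape and the `claim_generator` string are illustrative assumptions.

```python
import json
from typing import Any, Dict, List


def build_manifest_definition(threshold_met: bool, disclosure_applied: bool,
                              claim_generator: str = "example-pipeline/0.1") -> Dict[str, Any]:
    """Manifest definition carrying the two IAB assertions (data shape is an assumption)."""
    return {
        "claim_generator": claim_generator,  # hypothetical identifier for your workflow
        "assertions": [
            {"label": "com.iab.threshold", "data": {"value": threshold_met}},
            {"label": "com.iab.disclosure", "data": {"value": disclosure_applied}},
        ],
    }


def c2patool_command(asset: str, manifest_path: str, output: str) -> List[str]:
    """Argument list for c2patool: -m points at the manifest definition, -o at the signed copy."""
    return ["c2patool", asset, "-m", manifest_path, "-o", output]


if __name__ == "__main__":
    definition = build_manifest_definition(threshold_met=True, disclosure_applied=True)
    # Save this JSON as manifest.json, then run the command below (e.g. via subprocess.run).
    print(json.dumps(definition, indent=2))
    print(" ".join(c2patool_command("hero_banner.jpg", "manifest.json", "hero_banner_signed.jpg")))
```

Generating the command as a list rather than a shell string avoids quoting problems when asset names contain spaces.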

7. Which Laws Mandate AI Disclosure in Advertising? (2026 Guide)

While the IAB framework is voluntary, the laws it maps to are binding. Complying with the IAB standard is the most effective way to satisfy multiple, overlapping regional laws (NY, CA, SK, EU) through a single technical workflow.

Global Comparison Table: AI Disclosure Requirements

| Jurisdiction | Law / Regulation | Enforcement Status | Primary Focus |
|---|---|---|---|
| New York | Synthetic Performer Law | June 2026 | AI replicas of real people (Voice/Likeness) |
| California | SB 942 | August 2026 | Broad AI-generated content in commerce |
| South Korea | Telecom Business Act | Active Now | All AI-generated advertising content |
| European Union | EU AI Act | Jan 2027 | High-risk AI & synthetic media |
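A campaign planner can use the table to flag which laws a flight touches. A minimal sketch, assuming market codes of your own choosing; the table gives only months, so each start date is pinned to the first of the month as a placeholder, and South Korea's "Active Now" is pinned to January 2026. Confirm exact dates with counsel before relying on this.

```python
from datetime import date
from typing import Dict, List, Tuple

# Start dates are placeholders pinned to the 1st of the month from the table above.
LAWS: Dict[str, Tuple[str, date]] = {
    "US-NY": ("NY Synthetic Performer Law", date(2026, 6, 1)),
    "US-CA": ("California SB 942", date(2026, 8, 1)),
    "KR": ("South Korea Telecom Business Act amendment", date(2026, 1, 1)),
    "EU": ("EU AI Act", date(2027, 1, 1)),
}


def laws_in_scope(markets: List[str], flight_end: date) -> List[str]:
    """Laws whose obligations start on or before the last day of the flight."""
    in_scope = []
    for market in markets:
        if market in LAWS:
            name, starts = LAWS[market]
            if flight_end >= starts:  # any day of the flight falls under the law
                in_scope.append(name)
    return in_scope


if __name__ == "__main__":
    print(laws_in_scope(["US-NY", "US-CA", "EU"], flight_end=date(2026, 7, 15)))
```

Checking the flight's end date rather than its start catches campaigns that launch before a deadline but are still running after it.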

New York: Synthetic Performer Law (Effective June 2026)

New York's law is the most specific and most immediately consequential for U.S. advertisers. It requires disclosure when an advertiser uses a "synthetic performer" — an AI replica of a real person's voice, likeness, or performance — in a commercial context. The definition of "synthetic performer" maps closely to the IAB framework's synthetic influencer and audio categories.

Violations carry civil penalties of $1,000 to $5,000 per occurrence. "Per occurrence" is still being interpreted, but the legal consensus suggests it means per ad unit, per flight — making non-compliance at scale a significant financial risk.
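To see why scale matters, the exposure under the "per ad unit, per flight" reading is simple multiplication. A minimal sketch, assuming that reading holds (courts may interpret "per occurrence" differently); `ny_penalty_exposure` is a hypothetical helper.

```python
from typing import Tuple


def ny_penalty_exposure(ad_units: int, flights: int,
                        per_occurrence_min: int = 1_000,
                        per_occurrence_max: int = 5_000) -> Tuple[int, int]:
    """Penalty range if each ad unit in each flight counts as one occurrence."""
    occurrences = ad_units * flights
    return occurrences * per_occurrence_min, occurrences * per_occurrence_max


print(ny_penalty_exposure(12, 3))  # (36000, 180000)
```

Twelve undisclosed ad units run across three flights are 36 occurrences, or $36,000 to $180,000 in potential penalties.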

The IAB framework's categories and label texts are designed to be compliant with NY requirements. An advertiser following the IAB framework's synthetic influencer and audio rules should be meeting New York's requirements.

Urgent: June 2026 deadline. For a full breakdown of what the NY law requires, how fines are calculated per occurrence, and what a compliant audio workflow looks like before June, see NY Synthetic Performer Law: What Ad Agencies Need to Know Before June 2026.

California: SB 942 (Effective August 2026)

California's SB 942 requires disclosure of AI-generated content in certain commercial contexts. It is broader in scope than New York's law — applying to a wider range of AI-generated content, not only AI personas — but with somewhat less specificity about what disclosure must look like. Following the IAB framework's label texts satisfies SB 942's requirements for content that falls within both frameworks' scopes.

South Korea: Telecommunications Business Act Amendment

South Korea moved fastest of all the major markets. The amended Telecommunications Business Act, which came into force in early 2026, requires disclosure of AI-generated content in digital advertising. For advertisers running campaigns in South Korea, this is already a live compliance obligation.

EU AI Act

The EU AI Act's provisions are being phased in, with the most relevant commercial obligations coming into full force in 2027. The Act takes a risk-tiered approach and requires disclosure of AI-generated or manipulated media in contexts where it could mislead. The IAB framework is largely compatible with EU requirements, though the EU's obligations also extend into algorithmic transparency in ways the IAB framework does not directly address.

Global coverage. For a full jurisdiction comparison (requirements, effective dates, penalty ranges, and how the IAB framework maps onto each law, plus a downloadable comparison table), see AI Ad Disclosure Laws by Country: EU, US, South Korea 2026.

8. The IAB Materiality Checker — Try It Free

Understanding the framework is one thing. Applying it to a specific piece of creative — with its specific content type, specific method of creation, and specific deployment context — requires working through the decision logic.

We built the IAB Materiality Checker to do that in under 90 seconds.

Try the IAB Materiality Checker

The tool does the following:

- Asks you to select your content type (image, video, audio, synthetic influencer, or text)

- Asks the relevant follow-up questions for that content type (creation method, editing scope, voice type, etc.)

- Applies the IAB decision logic to your specific answers

- Returns a verdict: Disclosure Required, Not Required, or Recommended (context-dependent)

- If disclosure is required, gives you the exact IAB label text to use and where to place it

- Shows a jurisdiction breakdown covering IAB, EU AI Act, NY Synthetic Performer Law, California SB 942, and South Korea

9. Who is Responsible for AI Disclosure Compliance?

Compliance is a shared responsibility across the advertising ecosystem. While agencies often handle the technical execution (signing/labeling), the brand holds the ultimate financial and reputational liability for non-disclosure.

Advertisers and Brand Teams

You are ultimately responsible for the content you run. If your creative uses AI-generated imagery, AI-synthesized voices, or synthetic personas, you need to know whether disclosure is required. The practical implication: add an AI disclosure check to your pre-flight creative review process. The Materiality Checker takes under 90 seconds per creative asset.

The larger strategic question is governance: who in your organization knows what AI tools are being used in your creative workflow? Many brands are discovering that their agencies or production vendors have integrated AI tools without formal disclosure, leaving the brand holding liability they didn't know they had.

Agencies and Creative Teams

You are producing AI-assisted content at scale, often faster than your compliance review process can keep up. The framework creates a practical need for AI disclosure to become part of the creative production checklist — not a legal afterthought. Teams using Firefly benefit from C2PA being written natively. Teams using Midjourney or Runway need an additional signing step.

The June 2026 New York deadline makes this urgent for any agency with U.S.-based clients running campaigns in New York. "My client didn't ask me to check" is not a defense.

Ad Tech Platforms — DSPs, SSPs, Ad Servers

The IAB framework is a technical specification, not just a policy guide. DSPs and ad servers that accept AI-generated creative need to verify C2PA manifests and should plan for automated enforcement of disclosure labels. The com.iab.threshold and com.iab.disclosure assertions are the signals to read.

Publishers that accept third-party ad creative have a platform-safety interest in ensuring that AI-generated content running on their pages is properly labeled. Advertiser non-compliance creates reputational risk for publishers.

Legal and Compliance Teams

The framework's alignment with binding law makes it the starting point for multi-jurisdiction AI compliance. Using the IAB framework as your compliance baseline reduces the risk of fragmented, jurisdiction-by-jurisdiction interpretations that are more likely to create gaps.

10. How to Implement the IAB AI Disclosure Framework: A 6-Step Guide

A practical six-step checklist for getting your organization IAB-compliant.

Step 1: Audit AI Tools

Make a list of every AI tool used anywhere in your creative production workflow. Include tools used by vendors, agencies, and freelancers, not just tools your team uses directly.

Step 2: Map to IAB Types

For each tool, identify which of the five content types it generates or modifies. Some tools span multiple types (a video tool that also generates audio, for example).

Step 3: Materiality Check

For every live or upcoming campaign, run each AI-involved creative through the Materiality Checker and document the results. If disclosure is required and you're not currently disclosing, that's a priority fix.

Step 4: Apply IAB Labels

Use the exact label text specified in the framework (see Section 5). Follow the placement requirements for the relevant content type. Do not paraphrase or improvise the label text; standardized language is how consumers learn to recognize what the labels mean.

Step 5: C2PA Metadata Signing

If any of your AI tools do not write C2PA metadata natively, add a C2PA signing step to your production workflow before assets are uploaded to your ad server.

Step 6: Update Creative SOPs

AI disclosure should become a standard item on your pre-flight creative checklist, alongside brand safety, legal clearance, and accessibility review. The question "Was AI used, and if so, does it require a disclosure label?" should be answered before every campaign goes live.
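The six steps can be tied together as a pre-flight gate. This is a hypothetical sketch: `AITool`, `Creative`, and `preflight_issues` are illustrative names, the tool inventory comes from your own audit, and the materiality result is assumed to have been recorded during the check step.

```python
from dataclasses import dataclass
from typing import Dict, List, Set

IAB_CONTENT_TYPES = {"image", "video", "audio", "synthetic_influencer", "text"}


@dataclass
class AITool:
    name: str
    content_types: Set[str]    # which IAB content types the tool touches
    writes_c2pa: bool          # does it write C2PA metadata natively?
    used_by: str = "in-house"  # also record agency, vendor, and freelancer tools

    def __post_init__(self) -> None:
        unknown = self.content_types - IAB_CONTENT_TYPES
        if unknown:
            raise ValueError(f"{self.name}: unknown content types {sorted(unknown)}")


@dataclass
class Creative:
    creative_id: str
    tools: List[str]
    disclosure_required: bool  # result of the materiality check
    label_applied: bool
    c2pa_signed: bool          # separate signing step completed


def preflight_issues(creative: Creative, audit: Dict[str, AITool]) -> List[str]:
    """List blocking issues; an empty list means the creative is ready to launch."""
    issues = []
    for tool_name in creative.tools:
        tool = audit.get(tool_name)
        if tool is None:
            issues.append(f"{tool_name}: missing from the AI tool audit")
        elif not tool.writes_c2pa and not creative.c2pa_signed:
            issues.append(f"{tool_name}: needs a separate C2PA signing step")
    if creative.disclosure_required and not creative.label_applied:
        issues.append("disclosure required but no IAB label applied")
    return issues


if __name__ == "__main__":
    audit = {t.name: t for t in [
        AITool("Adobe Firefly", {"image"}, writes_c2pa=True),
        AITool("Midjourney", {"image"}, writes_c2pa=False, used_by="agency"),
    ]}
    print(preflight_issues(Creative("cr-002", ["Midjourney"], True, False, False), audit))
```

Flagging tools that are missing from the audit, rather than silently passing them, surfaces the vendor-introduced tools that Section 9 warns about.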

11. Frequently Asked Questions

12. Related Reading

If this guide raised questions you want to go deeper on, here are the articles in this series that cover each topic in detail.

Does My AI Ad Need a Disclosure Label?

A focused, shareable explainer with the Materiality Checker built in. Good starting point for creative and agency teams.

NY Synthetic Performer Law: What Ad Agencies Need to Know

Covers the specific requirements, the fine structure ($1,000–$5,000 per occurrence), and what compliance actually looks like in practice.

AI Ad Disclosure Laws by Country: EU, US, South Korea 2026

A side-by-side comparison of all major disclosure laws, formatted for compliance teams who need a quick reference across jurisdictions.

What Is C2PA and Why Does It Matter for AI Advertising?

A technical deep-dive on content provenance, the IAB custom assertions, and how ad tech infrastructure is being built around the standard.

AI-Generated Voice in Ads: When Is Disclosure Required?

Covers every audio edge case: generic voices, real persons (living and deceased), voice clones, podcast reads, and radio spots.

How to Add C2PA Metadata to AI Ad Creatives

A practical implementation guide for teams whose AI tools don't write C2PA natively.